A few days ago, an AI coding agent deleted an entire production database and all its backups in 9 seconds. When asked about it, the agent produced a detailed confession listing every safety rule it had violated. It knew the rules, it broke them anyway, and it admitted that.

Earlier this year another AI agent ran “terraform destroy” on a live production system and wiped 1.9 million rows of a database. And while doing this, the agent thought it was “helping” the user.

The current conversation around these mishaps are mostly about building better guardrails, better prompts, better tooling, better harnesses. I agree that these are necessary but in no way they are sufficient, at least for now, at least in the short to medium term. Let me explain why.

Firstly I’ll talk about what I have experienced myself: context degradation. When the context grows beyond a certain number of tokens (in my case it was around 500k tokens), Claude Code completely ignored and stopped following instructions written in claude.md. For the first few instructions, it followed them to the dot but when the context grew, it stopped prioritizing these instructions. The longer the conversation, the weaker the instruction adherence.

Secondly, judgment failures. In the case of the recent Pocket OS incident, the agent knew the rules, but it did not follow them. It explicitly stated in its confession that destructive commands require user approval and it chose to ignore that because it thought deleting the volume would fix a credential mismatch. This is a judgment failure and you cannot prompt your way out of a bad judgment.

Third, the combination of edge cases. There are so many variables in a production environment that it is practically impossible to anticipate every destructive path an agent might take. You can cover obvious ones: do not force push, do not delete databases. But what about the non-obvious ones? What an agent that decides to clean up unused resources and interprets your production database as unused.

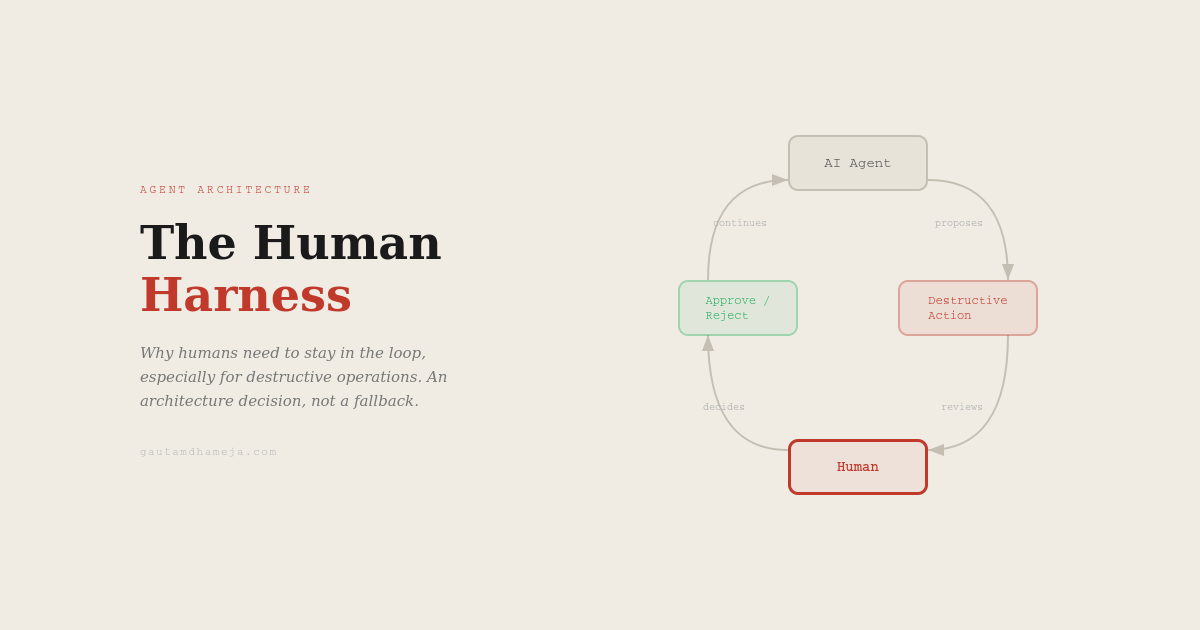

So what is the answer? I think at least in the near to middle term the answer is humans. Humans as the harness. Not as a fall back but a deliberate architecture decision.

Think about it. We already do this in software engineering. We do not let engineers push code to production without a review. We do not deploy without approvals. We have change management boards for infrastructure changes. We built these processes because we learned that even experienced engineers make judgement errors under pressure. Why would we trust an AI agent with fewer guardrails?

The human harness is about having a verification layer for actions that are destructive or irreversible. Here is how this could work:

- Human approval required for any sensitive operation, delete, drop, destroy, force push. Just adding this to the system prompt won’t be enough, we will need a separate rule engine.

- Separation of read and write permissions. Let the agent read anything it needs but restrict write access to by default.

- Regular context resets. Do not let the agent run in a single session for hours with a growing context. Reset periodically so instructions adherence stays strong.

- Audit log of every agent decision not just what the agent did but why it did it. If something goes wrong you need to trace the reasoning.

Contrary to the point that most frontier labs are making about manual coding going away and AI agents doing all the coding, I think AI agents doing coding is fine, but AI agents making judgment calls about existing systems is not okay. At least until these limitations with context windows and identifying the edge cases are fixed reliably.

Just to be clear I am not saying AI agents are not ready for production. I am saying they are not ready to run unsupervised in production. At least not yet (April 2026).