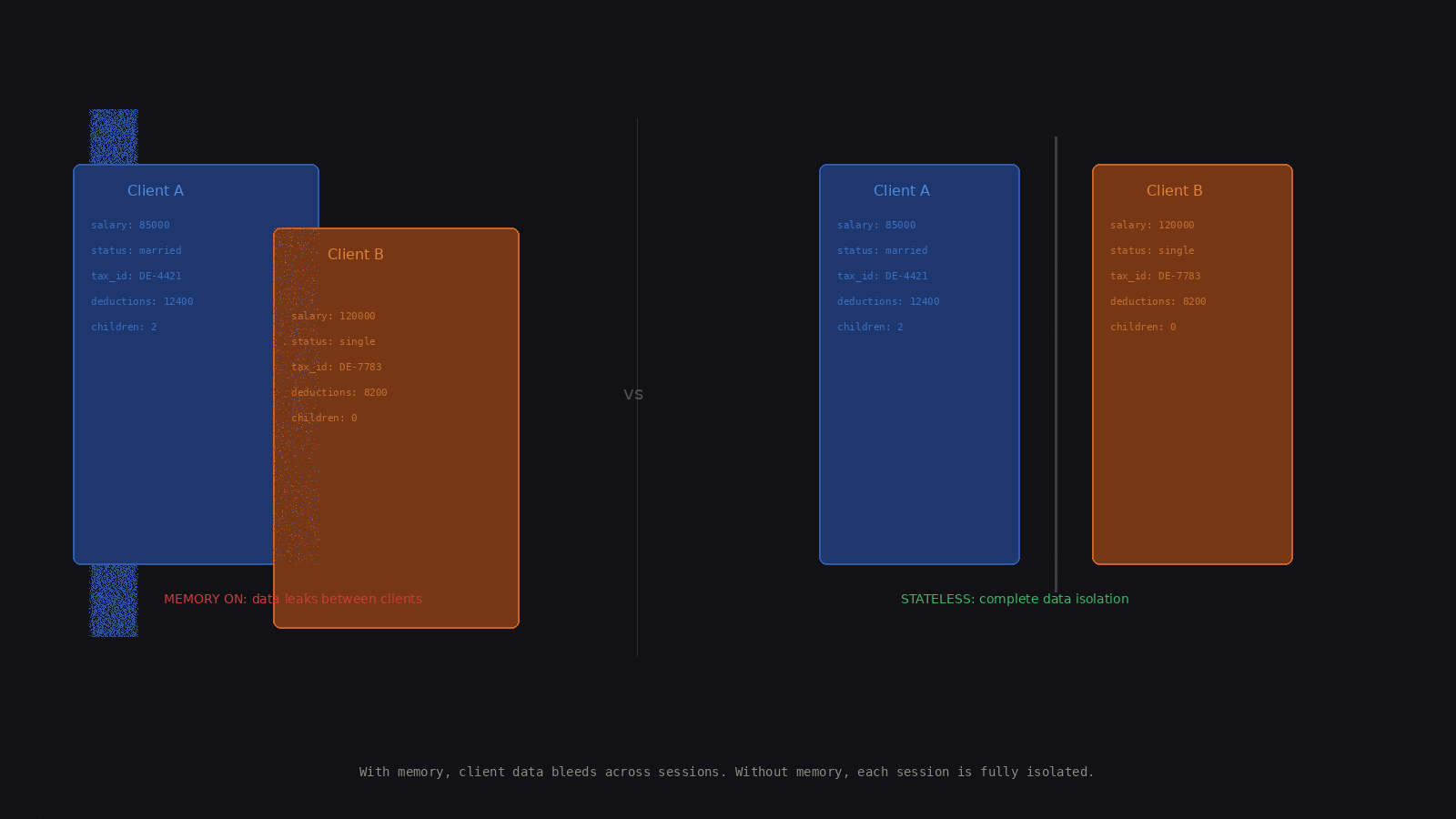

I was building an AI agent for a professional services use case for a customer, and the natural instinct was to add memory so that the agent could remember previous interactions, build context over time, and learn from the past queries and responses. Then I realized this agent handles multiple customers. If it remembers client A’s details and that context bleeds into client B’s details, then it’s not just a bug; it’s a compliance violation. It could also be a confidentiality violation. Then I got to thinking: do all AI agents need memory?

Agentic Memory Link to heading

Let’s first understand what agentic memory is. Agentic memory is any mechanism that allows an AI agent to retain and reuse information beyond the current request. This can take several forms: conversation history, a vector store memory, a shared state, a database or tool based retrieval. Most agentic frameworks, like LangChain, CrewAI, and AutoGen, include memory as a near-default component. The assumption in most tutorials is that memory makes agents smarter, and often it does, but smarter and safer are not the same things.

Memory As Liability Link to heading

Memory can become a liability for your agent. In my current use case, each customer’s data must be completely isolated. The agent should have zero knowledge of any other client when processing a query for one client. Memory here does not enhance the agent; it creates a cross-contamination vector. Some of the similar use cases are:

- Medical, patient data isolation

- Legal, attorney-client privilege

- Financial advisory, Chinese walls between clients

- Multi-tenant SaaS, data boundaries There are several real production use cases where you need absolute isolation of customer data, and a small compromise can become a big compliance issue.

LangChain also acknowledges this concern. In LangMem, memories use namespaces, typically based on user_id. Namespacing memory could be a mitigation, but it is not a solution. If the stakes are high enough (medical, legal, etc.), then the safest architecture could be stateless, not trusting a namespace boundary.

Stateless Agents Link to heading

So when agents do not have memory, what do they look like? They are stateless agents, and there is a clear need for them, as explained above for the use cases mentioned. Basically, every session starts fresh. Context is injected explicitly per request, and it is not accumulated over time. The agent knows only what you hand it right now, in that moment, during an interaction. This is not a limitation; this is a design choice. It’s the same principle as stateless microservices. Each request carries everything it needs, and the system is easier to reason about, test, audit, and scale.

The Decision Framework Link to heading

Use Memory: For projects like personal assistants, creative tools, long-running research tasks, and single-user applications where continuity improves the experience. That’s where we would need agentic memory, because without it the system loses continuity between sessions.

Go Stateless: For projects like multi-tenant systems, where data isolation is a must, regulated domains, professional services, and any system where an auditor might ask, “Why did the agent know this?” If there is any chance of legal liability, compliance violations, or trust erosion, go stateless.

Memory for agents is an architecture decision strictly based on the use case and the design. It is not a default. The frameworks make it easy to add because in some use cases it genuinely adds a lot of value, but the hard part is knowing when not to add memory to an agent. When you are building agents for enterprise use cases, the question isn’t “How do I add memory?” It should be “Should this agent remember anything at all?”

PS: This post was first published as an X article here.