We often run into situations where an API exposed by our backend services has to execute a long running task. To keep the user experience smooth and avoid blocking requests, it is good practice to execute these long running tasks asynchronously. Here’s how we built an asynchronous web API using Azure Service Fabric stateful micro-services.

Problem Statement

How do we handle HTTP PUT/POST/DELETE requests that involve long running operations in the backend, without making the user wait for a long time? For example, in an IoT system, our Web API exposed a delete operation on a user, and deleting a user also had to delete all devices related to that user. This made the whole operation a long running process, and we didn’t want to make the end user wait that long. So, we implemented a (kind of) asynchronous Web API.

Our Solution

Instead of a simple HTTP request-response exchange, we made it a four-step process:

1. HTTP PUT/POST/DELETE request (long running operation)

2. Accepted/Rejected (bad request) response with request Id

3. HTTP GET status request with request Id

4. Response

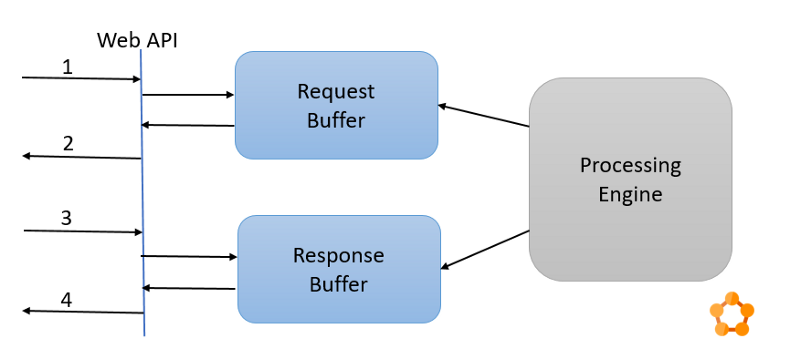

This solution is pretty common these days. We accept the initial (long running operation) request and return a unique identifier for it in the response. The user then sends a GET request to another API endpoint with this identifier and receives the final response to the initial request. The diagram above shows steps 1 through 4.
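
Seen from the caller's side, the four steps could look roughly like the sketch below. It is written in Python purely for illustration and is not our service code. `FakeApi`, `call_long_running`, the `req-N` identifiers, the "pending" reply and the use of status codes 202 and 200 are all assumptions made for the example.

```python
import itertools
import time


class FakeApi:
    """Hypothetical stand-in for the Web API: a request finishes after a few polls."""

    def __init__(self, polls_until_done=2):
        self._remaining = {}              # request id -> polls left before "done"
        self._ids = itertools.count(1)
        self._polls_until_done = polls_until_done

    def submit(self, operation):
        # Steps 1 and 2: accept the long running operation, return a request id.
        request_id = f"req-{next(self._ids)}"
        self._remaining[request_id] = self._polls_until_done
        return {"status": 202, "request_id": request_id}

    def status(self, request_id):
        # Steps 3 and 4: answer a status request for a known request id.
        if request_id not in self._remaining:
            return {"status": 404, "error": "unknown request id"}
        if self._remaining[request_id] > 0:
            self._remaining[request_id] -= 1
            return {"status": 202, "state": "pending"}
        return {"status": 200, "result": "user deleted"}


def call_long_running(api, operation, max_polls=10, delay_seconds=0.0):
    """Submit the operation, then poll the status endpoint until it completes."""
    accepted = api.submit(operation)
    if accepted["status"] != 202:
        return accepted                   # rejected, nothing to poll for
    for _ in range(max_polls):
        reply = api.status(accepted["request_id"])
        if reply["status"] != 202:
            return reply                  # final response (or an error)
        time.sleep(delay_seconds)
    return {"timed_out": True, "request_id": accepted["request_id"]}


result = call_long_running(FakeApi(), {"action": "delete_user", "user_id": "u-42"})
```

The caller never blocks on the long running work itself. It only holds on to the request Id and decides how often, and for how long, to ask for the outcome.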

Note that we did not use this solution for GET requests, because sending a GET request twice was a bit confusing for the user. Instead, we implemented response paging for GET requests that return large amounts of data.
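
The text does not describe the paging scheme in detail, so the following is only a minimal sketch of one common approach, page number plus page size. `get_page`, its parameters and the shape of the returned dictionary are hypothetical.

```python
def get_page(items, page=1, page_size=50):
    """Return one page of `items` plus enough metadata to request the next page."""
    if page < 1 or page_size < 1:
        raise ValueError("page and page_size must be positive")
    start = (page - 1) * page_size
    total_pages = (len(items) + page_size - 1) // page_size  # ceiling division
    return {
        "items": items[start:start + page_size],
        "page": page,
        "total_pages": total_pages,
        "has_next": page < total_pages,
    }


devices = [f"device-{i}" for i in range(1, 8)]   # seven hypothetical devices
first_page = get_page(devices, page=1, page_size=3)
last_page = get_page(devices, page=3, page_size=3)
```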

Implementation

To implement this, we needed reliable storage to hold the incoming requests and their corresponding responses. Several instances/nodes of each component would be running, and they would access this storage simultaneously for faster request processing.

To reduce the effort of implementing and maintaining these infrastructure/platform requirements, we chose Microsoft Azure Service Fabric stateful services. They have built-in support for all of these requirements: reliable storage, fast retrieval of data, and multi-instance support.

The following are the components we used to implement this solution. The diagram below shows how these components communicate with each other.

Web API

We exposed the API using an ASP.NET Web API hosted on a Service Fabric stateless micro-service. This is also where we validated the input of incoming requests.

Request Buffer

A stateful micro-service holding a reliable dictionary (requestId, request) for storing incoming requests. Every time the Web API was called with a PUT/POST/DELETE request, the request was validated and then sent to the request buffer service, which saved it into the reliable dictionary. Once the request was saved, we returned an “Accepted” response to the user through the Web API.
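
The validate, save, then accept sequence can be sketched as follows. This is an in-memory Python illustration and not the Service Fabric API: `RequestBuffer` is a hypothetical stand-in for the reliable dictionary, with a lock standing in for safe concurrent access. `handle_write_request`, the `user_id` validation rule and the 202/400 status codes are assumptions as well.

```python
import threading
import uuid


class RequestBuffer:
    """Hypothetical in-memory stand-in for the (requestId, request) dictionary."""

    def __init__(self):
        self._items = {}
        self._lock = threading.Lock()     # several callers may save at once

    def save(self, request_id, request):
        with self._lock:
            self._items[request_id] = request

    def get(self, request_id):
        with self._lock:
            return self._items.get(request_id)

    def __len__(self):
        with self._lock:
            return len(self._items)


def handle_write_request(buffer, method, payload):
    """Validate first, save second, and only then answer 'Accepted'."""
    if not payload.get("user_id"):
        return 400, {"error": "user_id is required"}    # rejected, never stored
    request_id = str(uuid.uuid4())
    buffer.save(request_id, {"method": method, "payload": payload})
    return 202, {"request_id": request_id}


buffer = RequestBuffer()
status, body = handle_write_request(buffer, "DELETE", {"user_id": "u-42"})
```

Answering "Accepted" only after the save has succeeded means that every request Id handed to a user refers to a request that is actually stored.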

Processing Engine

Now that there is a request in the request buffer, we need to process it. We attached a simple 1:1 listener to the reliable dictionary inside the request buffer service, and every time this listener received a request, we passed it to the downstream micro-services for processing. We used the Saga pattern for workflow and failure management during this processing.
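
To make the listener and the Saga pattern a bit more concrete, here is a small in-memory sketch in Python. It shows the general idea only, not our actual workflow. `NotifyingDict`, `run_saga`, the two steps and their compensations are all hypothetical, and the listener runs synchronously here for simplicity.

```python
class NotifyingDict:
    """Hypothetical stand-in for a dictionary that notifies one listener on add."""

    def __init__(self, listener):
        self._items = {}
        self._listener = listener

    def add(self, key, value):
        self._items[key] = value
        self._listener(key, value)


def run_saga(steps, context):
    """Run (action, compensation) pairs in order; undo completed steps on failure."""
    completed = []
    for action, compensate in steps:
        try:
            action(context)
        except Exception as exc:
            for undo in reversed(completed):      # compensate in reverse order
                undo(context)
            return {"ok": False, "error": str(exc)}
        completed.append(compensate)
    return {"ok": True}


log = []


def delete_devices(ctx):
    log.append(f"delete devices of {ctx['user_id']}")


def restore_devices(ctx):
    log.append(f"restore devices of {ctx['user_id']}")


def delete_user(ctx):
    if ctx.get("fail_user_delete"):               # simulated downstream failure
        raise RuntimeError("user service unavailable")
    log.append(f"delete user {ctx['user_id']}")


def restore_user(ctx):
    log.append(f"restore user {ctx['user_id']}")


STEPS = [(delete_devices, restore_devices), (delete_user, restore_user)]
results = {}


def on_request_added(request_id, request):
    # The listener: every new request is handed to the saga for processing.
    results[request_id] = run_saga(STEPS, request)


request_buffer = NotifyingDict(on_request_added)
request_buffer.add("req-1", {"user_id": "u-42"})
request_buffer.add("req-2", {"user_id": "u-43", "fail_user_delete": True})
```

In the second request the user deletion fails, so the already completed device deletion is compensated and the saga reports the failure instead of leaving the data half deleted.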

Response Buffer

Once the system had processed the request, the response was written into another reliable collection, keyed by the request Id of the initial request, inside another stateful micro-service which we called the Response Buffer. The Web API exposed this collection through a status endpoint: when the GET request for the response came in, we queried the collection using the request Id and returned the response, if it was there.
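
A server-side sketch of the status lookup, again in Python with an in-memory stand-in for the reliable collection. The text above only says that the response is returned if it is there, so the "pending" and "unknown request id" replies, the status codes and the names `ResponseBuffer` and `handle_status` are assumptions for illustration.

```python
class ResponseBuffer:
    """Hypothetical in-memory stand-in for the response collection."""

    def __init__(self):
        self._responses = {}

    def save(self, request_id, response):
        self._responses[request_id] = response

    def try_get(self, request_id):
        return self._responses.get(request_id)    # None when nothing is stored yet


def handle_status(accepted_ids, response_buffer, request_id):
    """Answer a GET status request for the given request id."""
    response = response_buffer.try_get(request_id)
    if response is not None:
        return 200, response                      # processing has finished
    if request_id in accepted_ids:
        return 202, {"state": "pending"}          # accepted, still being processed
    return 404, {"error": "unknown request id"}


accepted_ids = {"req-1", "req-2"}                 # ids handed out earlier
response_buffer = ResponseBuffer()
response_buffer.save("req-1", {"deleted_user": "u-42", "deleted_devices": 3})

finished = handle_status(accepted_ids, response_buffer, "req-1")
pending = handle_status(accepted_ids, response_buffer, "req-2")
unknown = handle_status(accepted_ids, response_buffer, "req-9")
```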

There are several other solutions to the long running HTTP request problem. Another one that we considered was webhooks, but we went ahead with the one described above.